12 Google London Calling

Two days inside Google Cloud's AI Innovators Expedition.

This post took longer to publish than it should have. A week of agentic coding in London and I needed a bit of a break.

Google invited around thirty companies to London for two days on agents, multimodal AI, and governance. The room included Wallapop, Typeform, JobandTalent, Fever, Freepik, RavenPack, eDreams, and Luzia. Two days of presentations, hands-on sessions, and an agent-building competition.

On the morning of Day 1 I presented what we are working on at Luzia - Nexo, a way for external agents to connect into our platform and reach our users. This was the soft launch. Between sessions I was coding and deploying it live, adapting what I was building to what I was hearing. There were plenty of bugs and glitches, which I fixed as I found them, but that is fine at this stage. The lure of coding with a team of agents had me with my laptop open in the Uber on the way to meet my sister for dinner.

What I Learnt

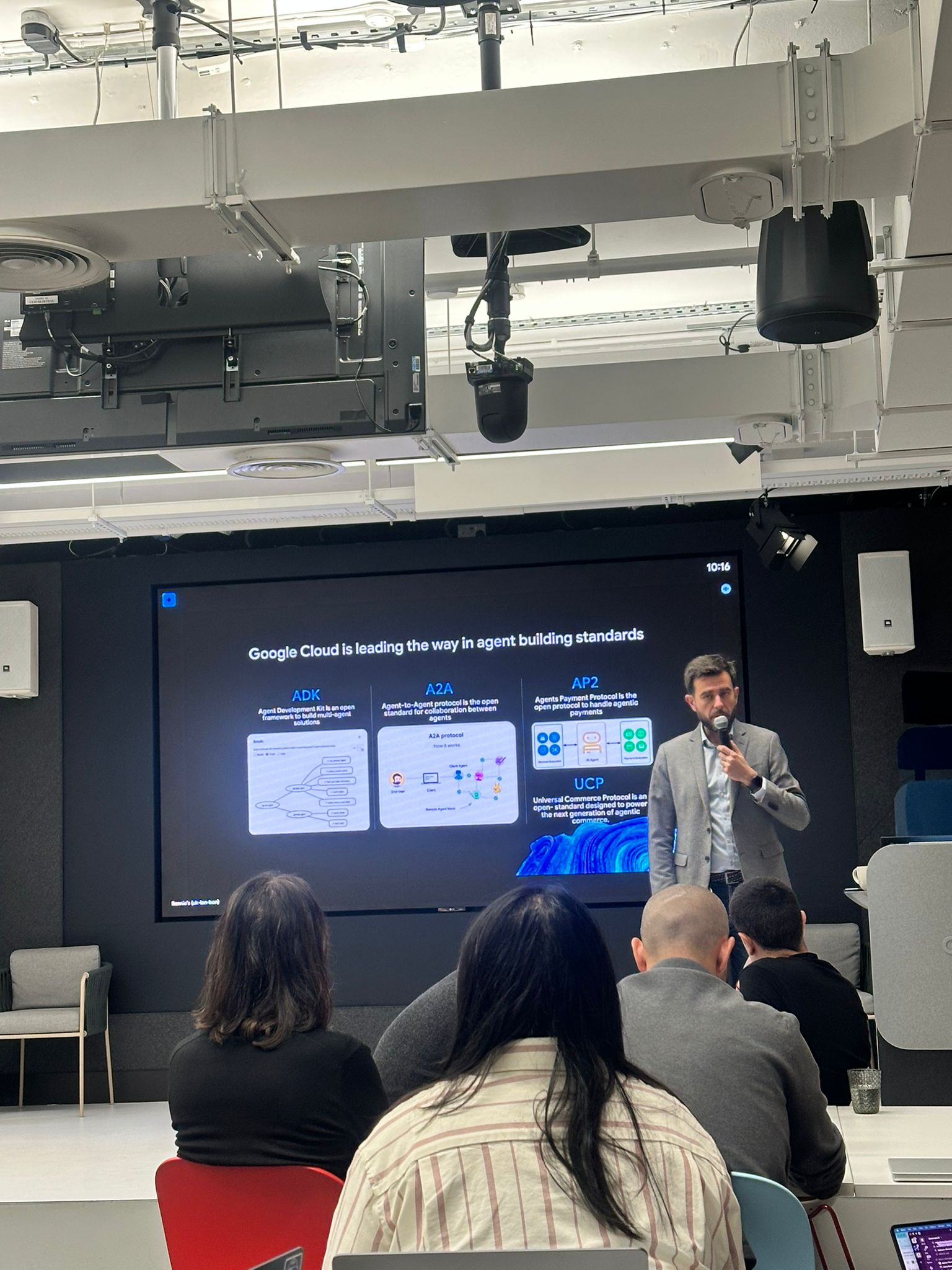

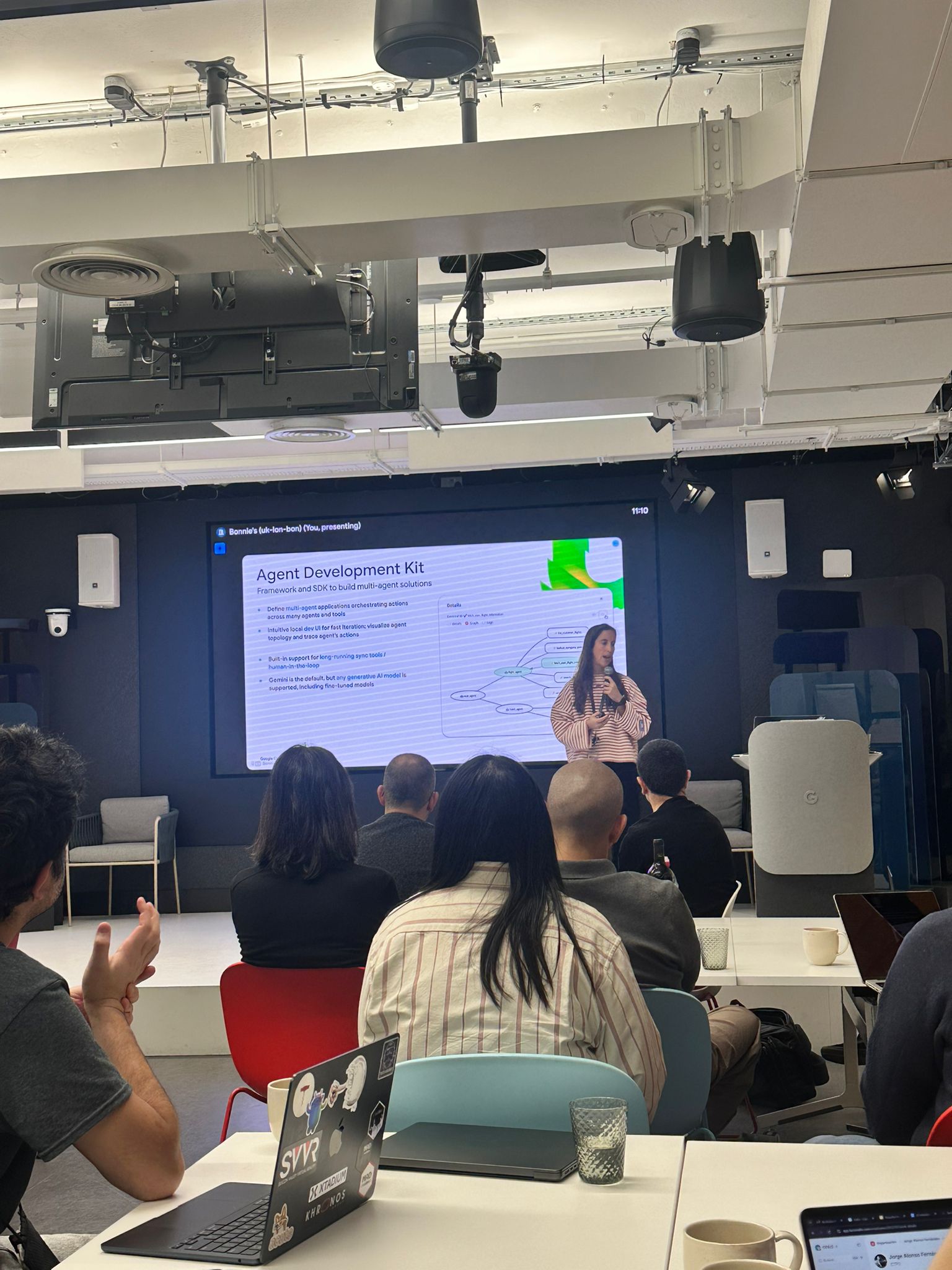

The sessions that stuck with me: A2A (Agent-to-Agent) and ADK (Agent Development Kit) are the two pieces I think will matter most. A2A is the protocol: it defines how agents find and coordinate with each other. ADK is the toolkit: an open, model-agnostic framework to build them. There were also sessions on agentic payments (AP2) and commerce (UCP) - early days, but the direction is clear.
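To make "find and coordinate" a bit more concrete, here is a toy sketch in Python. It is not the A2A wire format or the ADK API: `AgentCard`, `Registry`, and `delegate` are names I made up for illustration, the URLs are placeholders, and everything lives in memory rather than going over the network.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCard:
    """Hypothetical, simplified self-description an agent publishes."""
    name: str
    url: str
    skills: list[str] = field(default_factory=list)


class Registry:
    """Toy in-memory directory; a real setup would fetch cards over HTTP."""

    def __init__(self) -> None:
        self._cards: dict[str, AgentCard] = {}

    def register(self, card: AgentCard) -> None:
        self._cards[card.name] = card

    def find(self, skill: str) -> list[AgentCard]:
        # Discovery step: every agent that advertises the requested skill.
        return [c for c in self._cards.values() if skill in c.skills]


def delegate(registry: Registry, skill: str, task: str) -> str:
    # Coordination step: pick the first match and describe the hand-off.
    matches = registry.find(skill)
    if not matches:
        return f"no agent found for skill '{skill}'"
    target = matches[0]
    return f"send '{task}' to {target.name} at {target.url}"


registry = Registry()
registry.register(AgentCard("flights", "https://example.com/flights", ["book_flight"]))
registry.register(AgentCard("events", "https://example.com/events", ["find_events"]))
print(delegate(registry, "find_events", "concerts in London this weekend"))
```

The point of the sketch is the split: agents describe what they can do, and a caller picks one by capability rather than by hard-coded integration.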

Clement Farabet, VP AI Engineering at Google DeepMind and previously at NVIDIA, gave a talk that reminded me how much raw research firepower sits behind these products. Joaquin Cuenca, CEO of Freepik, was interesting on how a stock photo company pivots when AI can generate the images. Sergio Guadarrama, Principal Research at Google DeepMind, spoke on getting AI from research into product.

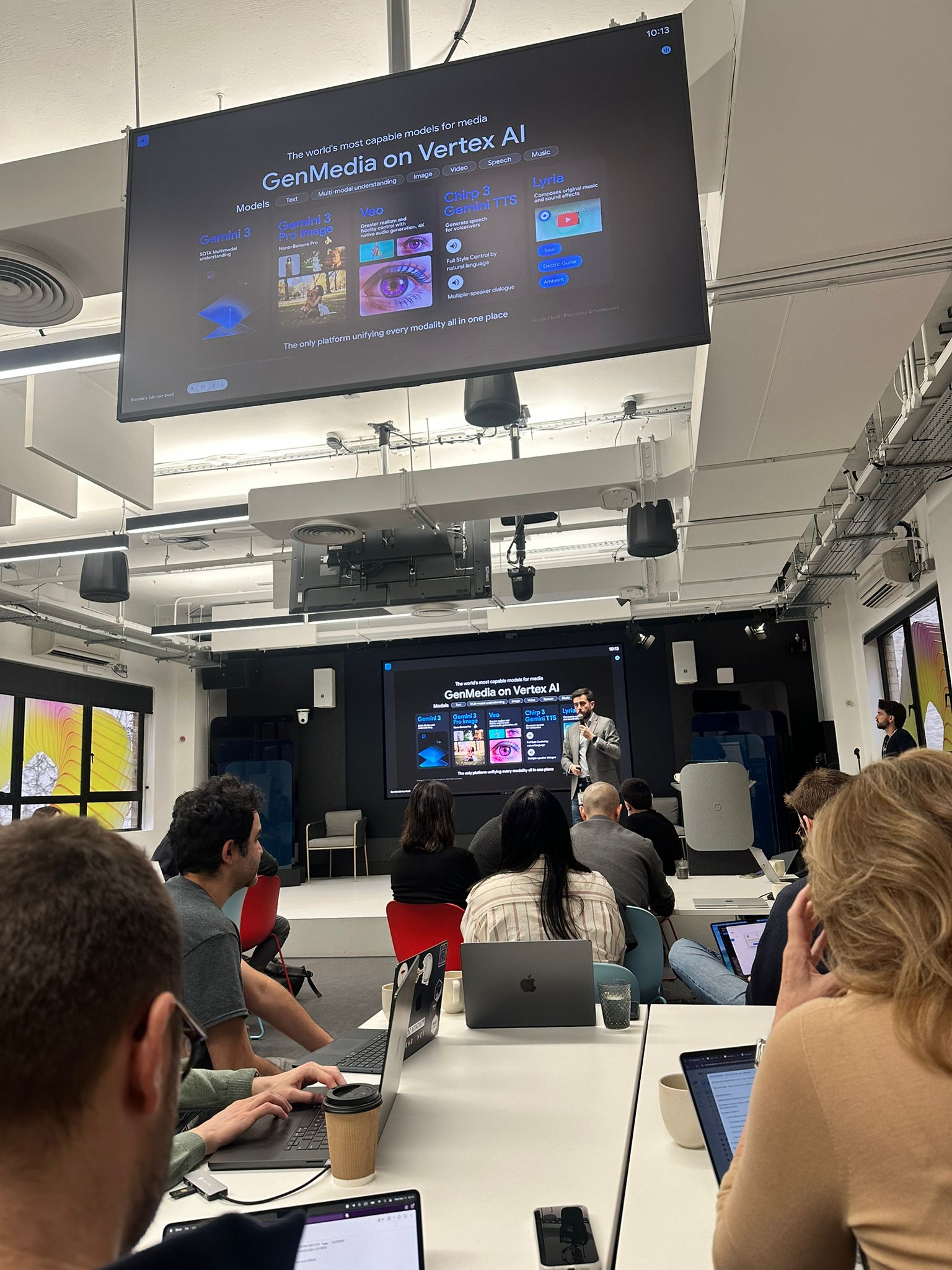

The GenMedia suite is impressive on paper - Veo for video, Imagen for image, Lyria for music, Chirp for speech, all under one platform. We will be running some proofs of concept at Luzia to see what actually works.

Developer Tooling

The agent and multimodal sessions were strong. The developer tooling sessions were where I had more questions than answers. Google has a lot of competing products in flight - the Windsurf deal, changes to Firebase, Gemini CLI, Antigravity - and the space is moving fast with Claude Code, Codex, and Cursor. I think Google is playing wait and see, and with this many options they can afford to.

From what I have seen, Gemini is genuinely impressive for UI work - I have history with it, but credit where it is due. The usage limits are still a problem though. Every time I have used it for coding I hit the plan ceiling within 15-20 minutes, and there is no smooth way to upgrade mid-session. Antigravity does not feel differentiated yet - another skin on VS Code when a lot of developers are moving back into the terminal. If I were at Google I would put weight behind something model-agnostic like OpenCode and invest at a higher abstraction level, something closer to Cowork. With the Windsurf deal, they probably are.

Google has a lot of really good content on AI-assisted coding. One of the people I follow most closely on this is Addy Osmani, an engineering leader at Google. He won Ireland’s Young Scientist competition in 2003 - the same competition Patrick Collison won two years later before co-founding Stripe. His writing is worth reading if you are thinking about where developer tooling is going.

While everyone else was building agents for the competition, I was in the back row building the thing I had just pitched. Different kind of hackathon.

Thanks to Jorge Gil Pena, a friend who works at Google Cloud, for the invite.